The boundary between human creativity and machine precision has dissolved, ushering in a new era where the symphonies of tomorrow are composed through a dialogue between data and soul.

The Architecture of Sound: From Text to Track

The most significant shift in the 2026 landscape is the maturation of Generative Audio. We have moved far beyond simple melodies: today, music is sculpted through descriptive intent. Rather than settling for generic mood tags, users write technical prompts that specify tempo, genre, and sonic texture.

- Precision Prompting: Creators use high-fidelity descriptors such as "120 BPM Synth-Pop with side-chained pads and crystalline arpeggios" to build complex arrangements from scratch.
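
To make the idea of a "technical prompt" concrete, here is a minimal sketch of how the descriptor above could be assembled from structured fields. The `TrackPrompt` class and its field names are hypothetical, invented for illustration; they do not correspond to the input format of Suno, Udio, Lyria, or any other tool.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class TrackPrompt:
    """Hypothetical container for the descriptors a creator might specify."""
    genre: str
    bpm: int
    textures: List[str] = field(default_factory=list)

    def to_text(self) -> str:
        # Build "<bpm> BPM <genre>", then append the textures if any were given.
        base = f"{self.bpm} BPM {self.genre}"
        if not self.textures:
            return base
        return f"{base} with {' and '.join(self.textures)}"

    def seconds_per_beat(self) -> float:
        # One minute divided by beats per minute gives the length of one beat.
        return 60.0 / self.bpm


prompt = TrackPrompt(
    genre="Synth-Pop",
    bpm=120,
    textures=["side-chained pads", "crystalline arpeggios"],
)
print(prompt.to_text())
print(prompt.seconds_per_beat())  # 0.5 seconds per beat at 120 BPM
```

Keeping tempo, genre, and texture as separate fields makes it easy to change one element, such as the BPM, and regenerate the prompt without rewriting the whole sentence.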

A "Hybrid Approach" has become the industry standard: professional musicians use AI to generate the foundational "base" of a track (the intricate percussion or atmospheric layers) and then overlay live vocals and traditional instrumentation to maintain the "human touch."

Sonic Autopsy: Advanced Features & Remix Culture

Artificial Intelligence has unlocked the internal DNA of audio files. With modern Stem Separation, a legacy recording can be dissected into isolated components such as drums, vocals, and bass, with far cleaner results than earlier tools could manage.

- Voice Conversion: Record a simple melody and transform it into the timbre of an opera singer or a global pop icon via licensed AI voice models.

- Infinite Iteration: The "Perfect Chorus" is no longer a stroke of luck. AI tools can now generate ten distinct variations of a specific section instantly, allowing for rapid creative prototyping.

Tools like Suno, Udio, and Google’s Lyria are leading this charge, offering everything from one-click song generation to high-quality instrumental transitions that can be hard to distinguish from studio recordings.

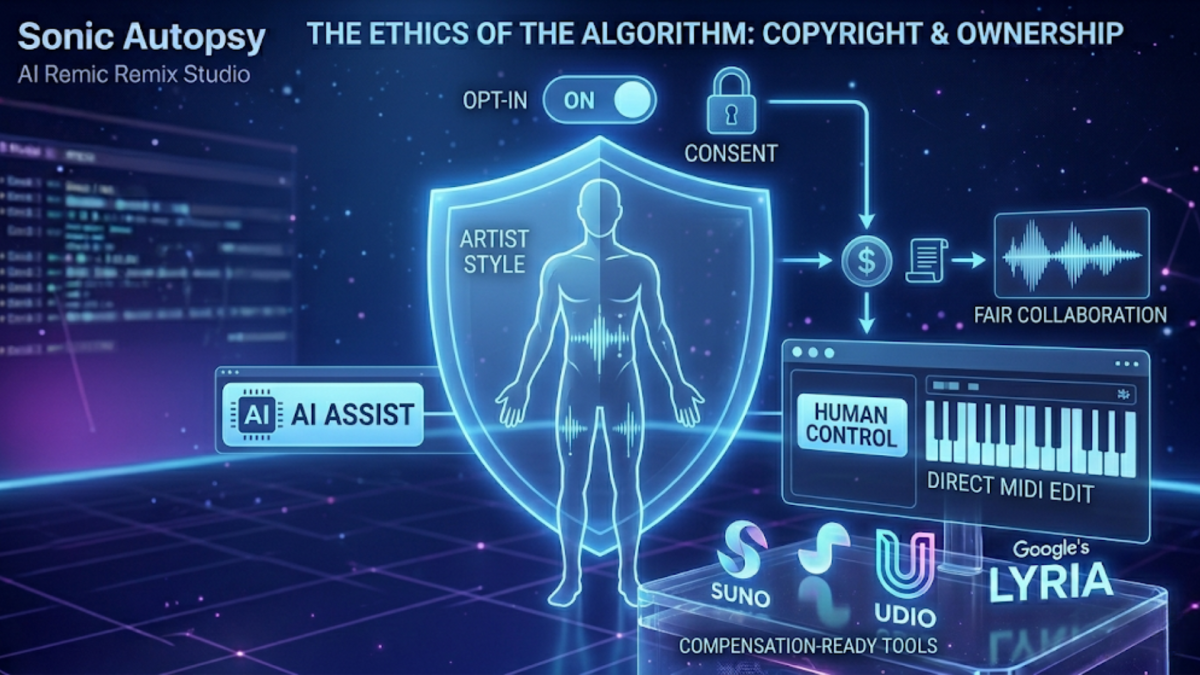

The Ethics of the Algorithm: Copyright & Ownership

As technology advances, so must the legal framework. 2026 marks the rise of the "Opt-in" Model. The industry is pivoting toward a system where artists choose whether their unique style or vocal signature may be used to train a model, and are compensated whenever it is.

This ensures that while AI democratizes music creation, the original architects of sound are protected and paid. Platforms like AIVA and Soundraw emphasize this balance by allowing creators to edit MIDI notes directly, ensuring the final output is a collaboration rather than a replacement.

Conclusion: A New Harmony

The AI Music Revolution is not about the end of the musician; it is about the expansion of the instrument. From pumping side-chain effects that give a track its "heartbeat" to supersaw synths that provide a cinematic scale, AI provides the tools, but humans provide the vision. As we look forward, the intersection of technology and art promises a world where the only limit to a masterpiece is the depth of the creator's imagination.
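
For readers curious about the two production terms above, the following is a rough sketch of what they mean in signal terms. It is a simplification under stated assumptions: the "pumping" is modeled as a gain dip that repeats on every beat, whereas a real side-chain compressor reacts to the level of the kick drum signal, and the supersaw is modeled as an average of a few detuned sawtooth waves. All function names and parameter values are illustrative, not taken from any particular synth or plugin.

```python
import math

SAMPLE_RATE = 8000  # low rate keeps the example fast; real audio uses 44100 or more


def sidechain_gain(t: float, bpm: float = 120.0,
                   depth: float = 0.8, release: float = 0.1) -> float:
    """Gain applied to a pad at time t (seconds).

    Assumes a kick on every beat: the gain drops by `depth` at the kick,
    then recovers exponentially with time constant `release` seconds.
    """
    beat_len = 60.0 / bpm
    since_kick = t % beat_len
    return 1.0 - depth * math.exp(-since_kick / release)


def saw(phase: float) -> float:
    """Naive sawtooth in [-1, 1) for a phase measured in cycles."""
    return 2.0 * (phase % 1.0) - 1.0


def supersaw(t: float, freq: float = 220.0,
             detune_cents=(-12, -6, 0, 6, 12)) -> float:
    """Average of several sawtooth voices, each detuned by a few cents."""
    voices = [saw(freq * 2 ** (cents / 1200) * t) for cents in detune_cents]
    return sum(voices) / len(voices)


# One second of a supersaw pad with the beat-synced "pump" applied.
pumped = [
    sidechain_gain(n / SAMPLE_RATE) * supersaw(n / SAMPLE_RATE)
    for n in range(SAMPLE_RATE)
]
```

At 120 BPM the gain dips every half second and swells back before the next beat, which is the "heartbeat" feel; the slight detuning between voices is what gives the supersaw its wide, layered character.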